AI and Sustainability

Overview

This lesson addresses the dual relationship between artificial intelligence and sustainability. It covers the environmental costs of developing and deploying AI systems at scale, including direct and indirect contributions to greenhouse gas emissions and resource depletion. It then examines strategies for sustainable AI development, from technical approaches to efficiency gains through to broader governance and design frameworks. Ethical dimensions include accountability for environmental harms, the distributional consequences of AI deployment, and normative questions of intergenerational and intragenerational equity. Applications of AI that advance climate action are treated through representative use cases in energy systems optimization, precision agriculture, and enhanced climate modeling.

Specific topics include:

- Energy consumption across model training and inference, together with the operational demands of supporting data centers.

- Hardware-related resource demands, including extraction of rare earth metals and other critical materials.

- Generation and management of electronic waste throughout the AI hardware lifecycle.

- Concrete implementations and quantitative outcomes in energy optimization, agricultural decision-support systems, and surrogate or hybrid climate modeling pipelines.

- Climate justice issues, in particular persistent global inequalities in access to computational resources, quality training data, and the realized benefits and burdens of AI-driven sustainability interventions.

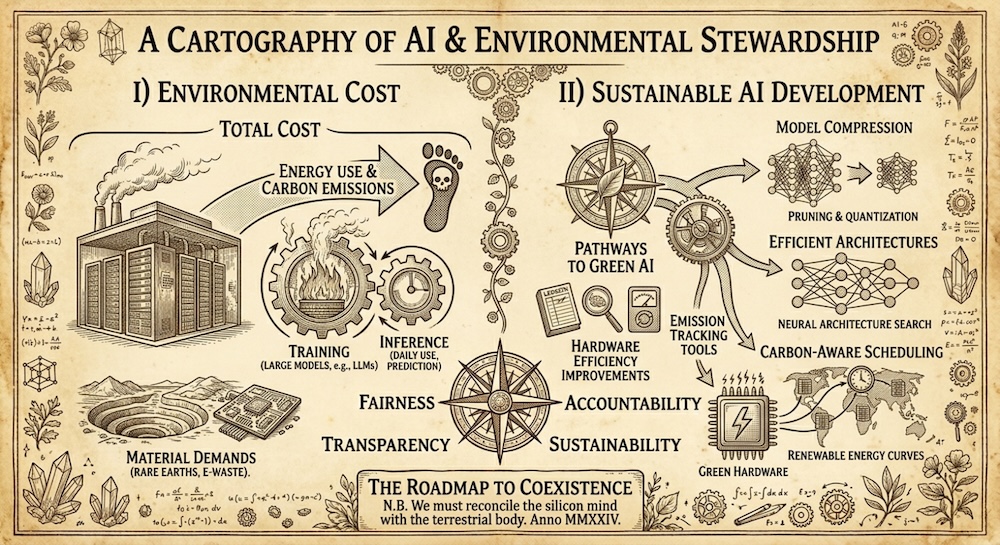

Figure 1 maps AI and environmental stewardship in a vintage, diagrammatic style, showing the environmental costs of AI (total cost, material demands) alongside pathways to sustainable AI development (model compression, efficient architectures, emission tracking tools).

Learning Objectives

- Define the primary environmental impacts associated with training and operating large AI models.

- Quantify energy requirements and carbon emissions for representative AI training runs using established benchmarks.

- Identify techniques for reducing the computational and environmental footprint of AI systems.

- Explain the role of rare earth metals, semiconductor manufacturing, and electronic waste in the AI hardware lifecycle.

- Describe specific AI applications that support sustainability goals in energy, agriculture, and climate modeling.

- Analyze ethical considerations and equity issues arising from the distribution of AI-driven sustainability benefits and burdens.

Motivation

AI systems require substantial computational resources, leading to measurable increases in global electricity demand and greenhouse gas emissions. At the same time, AI methods can optimize resource use and improve predictive accuracy in domains critical to environmental management. Balancing these opposing effects requires systematic evaluation of trade-offs across technical, economic, and social dimensions.

The Impact of AI on the Environment

Training and running deep learning models consumes substantial electricity, most of it drawn by data centers. A single training run of a large language model can emit as much carbon dioxide equivalent as several passenger vehicles do over their lifetimes. Inference often dominates lifecycle energy use because of high query volumes, while cooling systems for GPU clusters add significant overhead.

Energy Consumption in Model Training and Inference

Model training and inference differ substantially in their energy profiles. Training involves intensive matrix operations over large datasets for extended periods, while inference consists of repeated forward passes whose energy demand becomes significant in aggregate because of high query volumes.

Training the GPT-3 model with 175 billion parameters required an estimated 1287 megawatt-hours of electricity. At a typical United States grid carbon intensity, this training run alone produced approximately 552 metric tons of CO2 equivalent, comparable to the lifetime emissions of five average passenger vehicles driven 200,000 kilometers each, as reported in a University of Michigan analysis of AI training impacts.
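The arithmetic behind these figures can be sketched as follows. This is a minimal illustration, not the method used in the cited analysis: the function names are hypothetical, and the only inputs taken from the text are the 1287 MWh and 552 metric ton estimates. The grid intensity is derived from those two numbers rather than quoted from any source.

```python
# Emissions = energy (kWh) x grid carbon intensity (kg CO2e per kWh).
ENERGY_MWH = 1287      # GPT-3 training energy estimate quoted in the text
EMISSIONS_T = 552      # reported emissions in metric tons CO2e

def implied_intensity_kg_per_kwh(energy_mwh: float, emissions_t: float) -> float:
    """Grid carbon intensity implied by an energy/emissions pair."""
    return (emissions_t * 1000) / (energy_mwh * 1000)

def emissions_tonnes(energy_mwh: float, intensity_kg_per_kwh: float) -> float:
    """Emissions in metric tons for a given energy use and grid intensity."""
    return energy_mwh * 1000 * intensity_kg_per_kwh / 1000

intensity = implied_intensity_kg_per_kwh(ENERGY_MWH, EMISSIONS_T)
print(f"Implied grid intensity: {intensity:.3f} kg CO2e/kWh")

# Hypothetical cleaner grid (assumed value, for comparison only).
cleaner = emissions_tonnes(ENERGY_MWH, 0.1)
print(f"Same run on a 0.1 kg/kWh grid: {cleaner:.1f} t CO2e")
```

The same training run therefore yields very different emission totals depending on where and when it is executed.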

Contribution of Data Centers to Global Electricity Demand

Data centers that support AI workloads maintain continuous high utilization. Their electricity consumption represents a measurable and expanding fraction of national and global totals, driven by the proliferation of large-scale GPU and TPU clusters.

According to the International Energy Agency, global data centre electricity consumption reached approximately 415 terawatt-hours in 2024, equivalent to around 1.5 percent of total global electricity demand, with AI-specific workloads forming a rapidly growing share, as detailed in the IEA report on energy demand from AI.

Indirect Environmental Effects Beyond Direct Energy Use

Beyond electricity consumption, AI development drives demand for hardware manufacturing, which involves water use in semiconductor fabrication facilities and land transformation associated with raw material extraction. These upstream processes generate additional environmental pressures that operational energy accounting does not capture.

Fabrication of a single 300 mm silicon wafer for advanced AI accelerator chips requires approximately 8300 liters of water, including substantial volumes of ultra-pure water for rinsing and cleaning across hundreds of process steps. Scaled across the millions of GPUs deployed in cloud infrastructure, these indirect effects contribute to localized water stress in manufacturing regions such as Taiwan and South Korea, according to an industry analysis of semiconductor water footprints.

Energy Use of AI Models and Data Centers

Energy consumption scales with model size, measured in parameters, and with training dataset volume. Models released after GPT-3 have shown continued growth in compute requirements. Data centers hosting AI workloads operate at power usage effectiveness (PUE) values between 1.1 and 1.8. Hyperscale facilities in regions with coal-heavy grids amplify emissions. Renewable energy procurement by operators can lower carbon intensity, yet intermittency and transmission constraints limit full decarbonization.

Scaling of Energy Consumption with Model Size and Dataset Volume

Energy requirements for training large AI models increase with the number of parameters and the volume of training data. Training compute grows roughly in proportion to the product of parameter count and training tokens, and the self-attention layers in transformer-based architectures add a cost that scales quadratically with sequence length.

Training the GPT-3 model with 175 billion parameters required an estimated 1287 megawatt-hours of electricity, generating about 552 tons of carbon dioxide under a typical grid mix, according to estimates cited in the MIT News article on generative AI’s environmental impact.
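To make the dependence on model size and data volume concrete, the sketch below uses a widely cited rule of thumb for dense transformers: training compute is roughly 6 floating-point operations per parameter per training token. The rule and the 300 billion token count are assumptions brought in for illustration; neither comes from this lesson, and the function name is hypothetical.

```python
def training_flops(n_params: float, n_tokens: float) -> float:
    """Rule-of-thumb training compute: about 6 FLOPs per parameter per token."""
    return 6.0 * n_params * n_tokens

GPT3_PARAMS = 175e9   # parameter count from the text
GPT3_TOKENS = 300e9   # assumption: commonly cited training token count

base = training_flops(GPT3_PARAMS, GPT3_TOKENS)
print(f"Estimated training compute: {base:.2e} FLOPs")

# Doubling both model size and dataset size quadruples the compute.
doubled = training_flops(2 * GPT3_PARAMS, 2 * GPT3_TOKENS)
print(f"Compute ratio after doubling both: {doubled / base:.1f}x")
```

Because energy use tracks compute on fixed hardware, growing parameters and data together raises training energy much faster than growing either one alone.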

Growth in Compute Requirements for Subsequent Models

Compute demands for frontier AI models have continued to rise since GPT-3, driven by increases in parameter counts, dataset sizes, and training durations. This trend leads to substantially higher energy use for more recent models.

The compute used to train frontier AI models has roughly doubled every six to nine months in recent years. Training energy estimates for models such as GPT-4 and its successors range from tens to hundreds of gigawatt-hours, far exceeding the 1287 MWh used for GPT-3, as documented in analyses of training compute trends.
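A short calculation shows how quickly a fixed doubling time compounds. The sketch below only applies the six-to-nine-month doubling range quoted above over a three-year horizon; the horizon and the function name are illustrative assumptions.

```python
def growth_factor(elapsed_months: float, doubling_months: float) -> float:
    """Multiplicative growth after elapsed_months at a fixed doubling time."""
    return 2.0 ** (elapsed_months / doubling_months)

HORIZON_MONTHS = 36  # assumed three-year horizon, for illustration

for doubling in (6, 9):
    factor = growth_factor(HORIZON_MONTHS, doubling)
    print(f"Doubling every {doubling} months -> {factor:.0f}x in 3 years")
```

Under these assumptions the requirement grows between 16-fold and 64-fold in three years, which is why efficiency gains of a few tens of percent do not offset the trend on their own.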

Power Usage Effectiveness (PUE) in AI Data Centers

Power usage effectiveness (PUE) measures the ratio of total facility energy to the energy delivered to IT equipment. Values for hyperscale AI data centers typically range from 1.1 to 1.8, with leading operators achieving lower figures through advanced cooling and infrastructure design.

Leading hyperscale data centers report fleet-wide PUE values as low as 1.09, compared with an industry average around 1.56, according to Google’s data center efficiency reporting.
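The PUE ratio translates directly into overhead energy. The sketch below compares the two PUE values quoted above for a hypothetical IT load of 1000 MWh; the load and the function names are assumptions made for illustration.

```python
def facility_energy_mwh(it_energy_mwh: float, pue: float) -> float:
    """Total facility energy given IT energy and PUE (PUE = total / IT)."""
    return it_energy_mwh * pue

def overhead_mwh(it_energy_mwh: float, pue: float) -> float:
    """Energy spent on cooling, power conversion, and other non-IT loads."""
    return it_energy_mwh * (pue - 1.0)

IT_LOAD_MWH = 1000.0  # hypothetical IT energy use

for label, pue in (("leading fleet", 1.09), ("industry average", 1.56)):
    total = facility_energy_mwh(IT_LOAD_MWH, pue)
    extra = overhead_mwh(IT_LOAD_MWH, pue)
    print(f"{label}: PUE {pue} -> total {total:.0f} MWh, overhead {extra:.0f} MWh")
```

For the same IT workload, the facility at PUE 1.56 spends about six times as much energy on overhead as the facility at PUE 1.09.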

Limitations of Renewable Energy Procurement

Operators procure renewable energy through power purchase agreements to reduce carbon intensity. However, the intermittent nature of solar and wind generation, combined with constraints in transmission infrastructure, prevents complete decarbonization of always-on AI workloads.

Many data center operators have committed to 24/7 carbon-free energy matching. Even so, the intermittency of renewables and limits on grid transmission mean that backup or baseload sources are still required, particularly in regions with coal-heavy grids, as noted in discussions of AI energy challenges.

Resource Extraction and Hardware: Rare Earth Metals and E-Waste

AI hardware relies on rare earth elements such as neodymium and dysprosium, and on other critical minerals such as tantalum. Mining these materials involves habitat disruption, water contamination, and high energy inputs. Server lifespans in AI data centers have shortened due to high utilization and rapid performance demands. Discarded hardware contributes to global e-waste streams. Improper disposal releases heavy metals and persistent organic pollutants into soil and water.

Reliance on Rare Earth Elements in AI Hardware

Rare earth elements appear in permanent magnets for cooling fans, hard disk drives, and other components within servers and GPUs. Neodymium and dysprosium enhance magnetic strength and temperature resistance in these systems.

Neodymium constitutes approximately 30 percent of NdFeB permanent magnets by weight. Typical permanent magnet systems in server cooling fans contain 50-150 grams of NdFeB material per unit, creating substantial aggregate demand across hyperscale facilities, as detailed in an analysis of rare earth supply chain risks in AI infrastructure.

Environmental Impacts of Rare Earth Mining

Extraction and processing of rare earth elements require large volumes of water and chemicals. Operations generate toxic waste, including radioactive tailings, that can contaminate surrounding soil and groundwater.

Every tonne of rare earth mined generates up to 2000 tonnes of toxic waste, including radioactive materials. Processing in regions such as Ganzhou, China, has caused severe soil acidification and water contamination through discharge of chemically laden waste into tailings reservoirs, as reported in "The rare earths race risks environmental disaster".

Shortened Hardware Lifespans in AI Data Centers

High utilization rates in AI workloads accelerate thermal and electrical stress on GPUs and servers. This leads to faster degradation and more frequent replacement cycles than in traditional data center operations.

GPUs running at 60-70 percent utilization under AI workloads typically survive one to two years, with a maximum of three years, according to statements from a principal generative AI architect at Alphabet cited in "Datacenter GPU service life can be surprisingly short".

Contribution to Global E-Waste Streams and Disposal Risks

Rapid hardware turnover in AI infrastructure adds to the volume of discarded electronics. Only a fraction of global e-waste undergoes formal collection and environmentally sound recycling.

In 2022 the world generated a record 62 million tonnes of e-waste, with only 22.3 percent documented as formally collected and recycled. AI-driven data center upgrades are projected to increase this stream further, with improper disposal leading to release of heavy metals such as lead, mercury, and cadmium into soil and water, according to the Global E-waste Monitor 2024.

Sustainable AI Development

Sustainable AI encompasses three dimensions: environmental, social, and economic. Environmental sustainability focuses on minimizing energy, carbon, and material footprints through targeted technical approaches. Techniques include model compression methods such as pruning, quantization, and knowledge distillation, as well as efficient architectures including sparse attention and mixture-of-experts. Carbon-aware scheduling routes training jobs to regions or times with higher renewable availability. Software tools track emissions during development. Hardware efficiency improvements, including specialized accelerators like TPUs and neuromorphic chips, further reduce per-operation energy costs.

Model Compression Techniques

Model compression reduces the size and computational requirements of AI models while preserving performance. Common methods are pruning, which removes redundant weights or connections, quantization, which lowers numerical precision, and knowledge distillation, which transfers knowledge from a large model to a smaller one.

Applying pruning combined with knowledge distillation to the BERT model resulted in a 32.097 percent reduction in energy consumption while maintaining accuracy, precision, recall, and F1-score above 95.9 percent on a sentiment analysis task, as reported in a comparative analysis of model compression techniques for achieving carbon-efficient AI.
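Two of the compression methods named above can be sketched on a toy weight vector. This is a simplified illustration in plain Python with hypothetical function names and made-up weights. It does not reproduce the BERT experiment, and knowledge distillation is omitted because it requires a training loop.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction of weights."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    threshold = sorted(abs(w) for w in weights)[k - 1]
    pruned, removed = [], 0
    for w in weights:
        if abs(w) <= threshold and removed < k:
            pruned.append(0.0)
            removed += 1
        else:
            pruned.append(w)
    return pruned

def quantize_int8(weights):
    """Symmetric int8 quantization; returns (integer values, scale)."""
    max_abs = max(abs(w) for w in weights) or 1.0
    scale = max_abs / 127
    return [round(w / scale) for w in weights], scale

def dequantize(values, scale):
    """Map int8 values back to approximate floating-point weights."""
    return [v * scale for v in values]

weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, 0.45, -0.1]  # toy values
pruned = magnitude_prune(weights, sparsity=0.5)
quantized, scale = quantize_int8(pruned)
restored = dequantize(quantized, scale)

print("pruned:   ", pruned)
print("quantized:", quantized)
print("max error:", max(abs(a - b) for a, b in zip(pruned, restored)))
```

Pruning reduces the number of non-zero weights that must be stored and multiplied, while quantization shrinks each remaining weight from 32 bits to 8 at the cost of a small rounding error.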

Efficient Architectures

Efficient architectures lower compute demands through structural innovations. Sparse attention mechanisms skip unnecessary token interactions, while mixture-of-experts layers activate only a subset of specialized sub-networks for each input.

Mixture-of-experts architectures enable models to scale to trillions of parameters while activating only a small fraction during inference. This approach achieves comparable quality to dense models with substantially lower training and inference costs, with the Switch Transformer demonstrating up to 7 times faster pretraining for equivalent quality, as detailed in a comprehensive survey of mixture-of-experts algorithms.
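The routing idea behind mixture-of-experts layers can be sketched in a few lines. In this simplified illustration the experts are plain functions and the router scores are fixed hypothetical values. In a real model the scores come from a learned layer applied to each token, and the experts are neural sub-networks.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Return (expert_index, gate_weight) pairs for the k best experts."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_logits, k=1):
    """Combine outputs of the selected experts; the rest are never called."""
    return sum(gate * experts[i](x) for i, gate in route_top_k(router_logits, k))

# Four toy experts; only k of them run for a given input.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
logits = [0.1, 2.0, 0.3, -1.0]  # hypothetical router scores for one token

print(route_top_k(logits, k=1))
print(moe_forward(3.0, experts, logits, k=1))
```

With `k=1`, only one of the four experts executes, so per-token compute stays close to that of a single expert even though the layer holds four experts' worth of parameters.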

Carbon-Aware Scheduling

Carbon-aware scheduling dynamically shifts flexible AI workloads to times or locations where electricity has lower carbon intensity, often aligned with peaks in renewable generation.

Carbon-aware scheduling algorithms that use grid forecasts and renewable availability can reduce carbon emissions and energy costs by 5-10 percent for flexible AI workloads, with greater gains during periods of high renewable output, according to simulations of carbon-aware scheduling for AI data center workloads.
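A minimal version of time shifting can be sketched as a sliding-window search over an hourly forecast. The forecast values and the function name below are hypothetical, and the sketch assumes the job runs in one contiguous block. Production schedulers also weigh deadlines, prices, and forecast uncertainty.

```python
def best_start_hour(forecast, job_hours):
    """Return (start_index, mean_intensity) of the cleanest contiguous window."""
    if job_hours <= 0 or job_hours > len(forecast):
        raise ValueError("job_hours must fit inside the forecast horizon")
    window = sum(forecast[:job_hours])
    best_start, best_sum = 0, window
    for start in range(1, len(forecast) - job_hours + 1):
        # Slide the window: add the newest hour, drop the oldest.
        window += forecast[start + job_hours - 1] - forecast[start - 1]
        if window < best_sum:
            best_start, best_sum = start, window
    return best_start, best_sum / job_hours

# Hypothetical hourly grid intensity forecast in g CO2e/kWh.
forecast = [520, 500, 480, 410, 300, 220, 180, 210, 330, 450, 510, 530]

start, mean_intensity = best_start_hour(forecast, job_hours=3)
run_now = sum(forecast[:3]) / 3
print(f"Start at hour {start}: {mean_intensity:.0f} g/kWh vs {run_now:.0f} g/kWh now")
```

The saving in this toy forecast is far larger than the 5-10 percent reported above because the hypothetical curve has an unusually deep midday dip and the job is fully flexible.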

Emission Tracking Tools

Emission tracking tools integrate directly into development workflows to measure and report the carbon footprint of code execution in real time.

CodeCarbon is an open-source Python library that tracks energy consumption of CPU, GPU, and RAM during model training or inference and converts these measurements into estimated CO2 emissions based on the local electricity grid intensity, as described on the official CodeCarbon project site.
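The principle behind such tools can be illustrated with a small context manager that times a code block and converts the elapsed time into an emissions estimate. This is not the CodeCarbon API. It is a hypothetical sketch that assumes a constant average power draw, whereas real trackers sample hardware power and look up regional grid intensity.

```python
import time
from contextlib import contextmanager

@contextmanager
def track_emissions(avg_power_watts, grid_g_per_kwh, report):
    """Time the enclosed block and store an energy and emissions estimate."""
    start = time.perf_counter()
    try:
        yield
    finally:
        elapsed_hours = (time.perf_counter() - start) / 3600
        energy_kwh = avg_power_watts / 1000 * elapsed_hours
        report["energy_kwh"] = energy_kwh
        report["emissions_g"] = energy_kwh * grid_g_per_kwh

report = {}
# Assumed values: 300 W average draw, 400 g CO2e/kWh grid intensity.
with track_emissions(avg_power_watts=300, grid_g_per_kwh=400, report=report):
    sum(i * i for i in range(100_000))  # stand-in for a training step

print(report)
```

Wrapping training or inference code in this way makes the footprint visible at the point where design decisions are made.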

Hardware Efficiency Improvements

Specialized hardware accelerators optimize energy use for AI workloads. Tensor Processing Units (TPUs) and neuromorphic chips provide higher performance per watt compared with general-purpose GPUs for targeted tasks.

Google TPUs achieved a 3x improvement in carbon-efficiency of AI workloads from the TPU v4 generation to Trillium through hardware design advances, with operational electricity emissions forming the majority of lifecycle impact, according to Google’s sustainability study on TPU hardware.

AI for Climate Action and Associated Justice Considerations

AI systems contribute to climate action through targeted applications in energy systems, agriculture, and climate modeling. These applications deliver measurable efficiency gains and improved predictive capabilities. At the same time, access to high-performance computing and quality training data remains concentrated in high-income countries and large technology firms. Communities most vulnerable to climate impacts often lack infrastructure to benefit from or influence the design of these tools. AI solutions developed primarily in temperate regions may transfer poorly to tropical or arid contexts due to data biases. Extraction of minerals for AI hardware disproportionately affects indigenous territories and low-income nations, while e-waste is frequently exported to regions with weaker environmental regulations, thereby externalizing health and ecological costs.

AI Applications in Energy Systems

Reinforcement learning optimizes building HVAC controls and data center cooling, achieving substantial reductions in energy consumption while preserving comfort or operational parameters. AI also supports renewable energy forecasting to reduce curtailment of wind and solar generation.

DeepMind applied machine learning to Google data centre cooling systems and achieved up to a 40 percent reduction in the energy used for cooling, which translated to a 15 percent reduction in overall PUE overhead (the portion of Power Usage Effectiveness above the ideal value of 1.0), as reported in the DeepMind blog on data centre cooling efficiency.

AI Applications in Agriculture

Convolutional neural networks process satellite and drone imagery to identify crop stress, enabling precision irrigation and fertilizer application. Predictive models estimate yields under varying climate scenarios to support adaptation for smallholder farmers.

Studies applying these networks to multispectral satellite imagery report improved accuracy in plot-level monitoring and yield prediction across diverse agricultural regions, supporting precision agriculture practices that optimize water and fertilizer use, as reviewed in "A Review of CNN Applications in Smart Agriculture Using Satellite and UAV Imagery".

AI Applications in Climate Modeling

AI surrogate models emulate computationally intensive physics-based simulations at lower cost. Graph neural networks capture atmospheric dynamics, generative models perform downscaling from global to regional resolutions, and hybrid approaches integrate differentiable physics with learned components to enhance long-term forecast accuracy.

Graph neural networks serve as surrogate models that approximate complex climate simulations, reducing computational demands while maintaining fidelity for tasks such as downscaling coarse global projections or predicting extreme events, as explored in projects on "Graph Convolutional Neural Networks as Surrogate Models for Climate Simulation".

Global Inequalities and Climate Justice in AI-Driven Solutions

Concentration of computational resources and data limits participation by vulnerable communities. Data biases reduce the effectiveness of AI tools in non-temperate regions. Hardware-related extraction and waste streams impose disproportionate burdens on indigenous and low-income populations.

AI development and deployment for sustainability often exacerbate climate injustices because high-performance computing and high-quality training data are concentrated in high-income countries, while communities in the Global South face disproportionate climate impacts yet lack infrastructure to benefit from or shape AI solutions, as discussed in the World Economic Forum article on ensuring climate justice in the age of AI.

Burdens of Hardware Supply Chains

Mining of rare earth elements and other critical materials for AI hardware affects indigenous territories and low-income nations. E-waste generated by short hardware lifespans is frequently exported to regions with limited regulatory oversight.

Rapid growth in AI infrastructure increases demand for rare earth elements and generates substantial e-waste. Much of this waste is exported to countries with weaker environmental standards, externalizing pollution and health risks to communities in the Global South, as examined in analyses of AI and the critical minerals crunch.

Common Limitations Observed in Practice

Reported energy figures for model training vary with assumptions about grid carbon intensity and scope of included infrastructure. Reproducibility of efficiency claims is limited by proprietary hardware and undisclosed optimizations. Many sustainability interventions focus narrowly on operational energy while neglecting embodied carbon in hardware manufacturing. Scalability of AI-driven solutions is constrained by data availability in underrepresented regions and by the rebound effect, whereby efficiency gains stimulate increased overall consumption.

Variability in Reported Energy and Carbon Figures

Energy and carbon estimates for AI training depend heavily on the assumed electricity grid mix, the boundaries of system scope, and the inclusion or exclusion of infrastructure overhead such as cooling and networking.

Published estimates for training a single large language model can differ by a factor of two or more depending on whether a global average grid intensity or a specific regional mix is applied, and whether only direct GPU energy or the full facility footprint is considered, as highlighted in comparative reviews of AI carbon accounting methods.
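The spread described above can be reproduced with a small sensitivity table. All inputs in this sketch are hypothetical: a 1000 MWh GPU energy figure, two grid intensities, and two accounting scopes expressed as a PUE multiplier.

```python
from itertools import product

GPU_ENERGY_MWH = 1000.0  # hypothetical direct GPU energy for one training run

# Hypothetical grid intensities in kg CO2e/kWh.
intensities = {"regional low-carbon mix": 0.20, "global average mix": 0.45}
# Accounting scope as a multiplier on GPU energy.
scopes = {"GPU energy only": 1.0, "full facility": 1.5}

estimates = {}
for (grid, ci), (scope, pue) in product(intensities.items(), scopes.items()):
    # MWh x kg/kWh gives metric tons, since the two factors of 1000 cancel.
    estimates[(grid, scope)] = GPU_ENERGY_MWH * pue * ci

for (grid, scope), tonnes in estimates.items():
    print(f"{grid:24s} | {scope:16s} | {tonnes:6.1f} t CO2e")

spread = max(estimates.values()) / min(estimates.values())
print(f"Highest estimate is {spread:.2f}x the lowest")
```

Identical hardware measurements therefore support very different headline numbers, which is why reported figures should state their grid and scope assumptions.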

Limited Reproducibility of Efficiency Claims

Many efficiency improvements reported in research or industry announcements rely on proprietary hardware configurations or undisclosed optimization techniques, making independent verification difficult.

Claims of substantial energy savings from new model architectures or training methods are often based on internal benchmarks that cannot be reproduced externally, because the exact hardware and software stack is not accessible, as noted in critiques of reproducibility in sustainable AI research.

Narrow Focus on Operational Energy

A large proportion of sustainability efforts in AI target only the energy consumed during training and inference, while the embodied carbon associated with semiconductor manufacturing, mining of raw materials, and equipment disposal receives limited attention.

Lifecycle assessments of large AI models show that embodied emissions from hardware manufacturing can account for 20-50 percent of total carbon footprint, yet most public sustainability reports and academic papers continue to emphasize operational electricity use alone.

Constraints on Scalability and the Rebound Effect

Even when AI solutions demonstrate local efficiency gains, their broader scalability is limited by sparse high-quality data in many regions of the Global South and by the rebound effect, in which efficiency improvements lead to greater overall resource consumption.

Improvements in energy efficiency of AI systems for smart grids or precision agriculture can result in expanded deployment and higher total energy demand, offsetting part or all of the intended environmental benefits, a phenomenon documented in energy economics literature on AI-enabled efficiency programs.
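The rebound effect can be expressed as simple arithmetic on two quantities: the per-unit efficiency gain and the growth in usage that follows. The percentages and function names in this sketch are hypothetical and serve only to show when savings shrink or reverse.

```python
def net_energy_change(efficiency_gain, usage_growth):
    """Relative change in total energy: (1 - gain) * (1 + growth) - 1."""
    return (1 - efficiency_gain) * (1 + usage_growth) - 1

def rebound_fraction(efficiency_gain, usage_growth):
    """Share of the expected saving that is lost to increased usage."""
    actual_saving = -net_energy_change(efficiency_gain, usage_growth)
    return (efficiency_gain - actual_saving) / efficiency_gain

# Hypothetical scenarios: a 30% per-unit efficiency gain with rising usage.
for growth in (0.0, 0.25, 0.50):
    change = net_energy_change(0.30, growth)
    rebound = rebound_fraction(0.30, growth)
    print(f"usage +{growth:.0%}: total energy {change:+.1%}, rebound {rebound:.0%}")
```

A rebound above 100 percent, as in the last scenario, means total consumption ends up higher than before the efficiency improvement.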

Summary

AI systems impose measurable environmental costs through energy use, carbon emissions, and material demands. Counterbalancing strategies include algorithmic efficiency improvements, renewable-powered infrastructure, and lifecycle assessment. AI applications in energy, agriculture, and climate modeling offer pathways to mitigate environmental degradation, yet their benefits are unevenly distributed. Addressing ethical and justice dimensions requires explicit consideration of global inequalities in both the burdens and gains of AI technologies.